I am working on a project where a popup window asks for some parameters and then kicks off a process that may take a while to return. Information on creating a new window and centering it isn’t readily available, so I thought I’d post some of the testing code I have written, which will end up in the project.

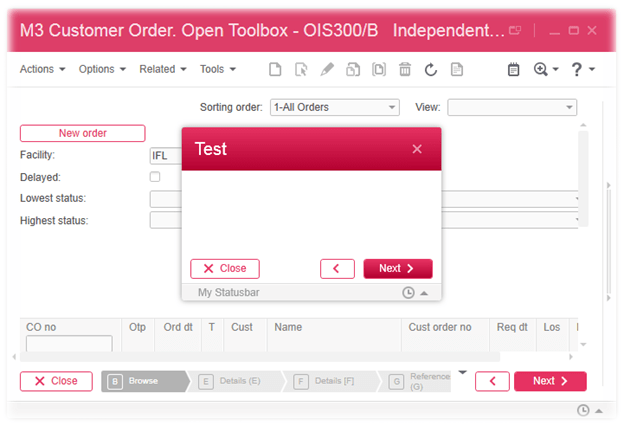

So, first off, I want to spawn a window in the Smart Office style. It needs to be a modal dialog box: the user should be bound to and focused only on MY script 😉

Then I want to add a standard status bar so we can provide updates on the progress of our long-running process. I also wanted the Close, Previous and Next buttons, and I wanted to center the popup on the window it was launched from.

You’d think that none of these things would be terribly difficult 🙂

Retrieving the Parent Window Position

Access to the window comes via the Host property on the controller object that is passed to the Init() method. It doesn’t give us a Window object, nor does it give us the EmbeddedHostWindow object. However, it does give us access to a number of properties and functions which allow us to get what we need.

We can retrieve our window’s width and height directly from controller.Host.Width and controller.Host.Height.

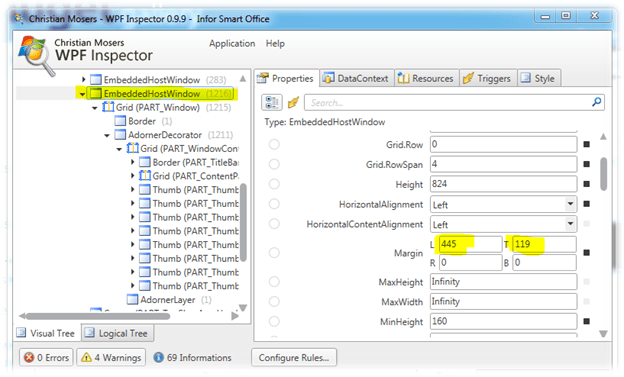

However, getting the actual left and top positions of the window proved to be more challenging. I ended up using a tool called WPF Inspector, and after some investigation I discovered that the position is determined by the margins, not the Left and Top properties.

But this presented another issue. None of the Host properties provides a direct path to the EmbeddedHostWindow from which we could retrieve the margins.

It turned out that the controller.Host.VisualElement.Name property was “PART_Window”, which we can see in the screenshot above. This meant that the VisualElement was a child of the EmbeddedHostWindow.

So, we can use the VisualTreeHelper.GetParent() method to go up a level. As we are probably entering a world that is subject to change, in my code I actually run a loop iterating up the tree until I find an EmbeddedHostWindow.

Now that we have the EmbeddedHostWindow, we can extract the margin values and save them for when we position our child window.
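The walk up the tree can be isolated into a small helper. This is only a sketch, written without type annotations so that it also runs as ordinary JavaScript; I have not tested it inside Smart Office. The names findAncestorByTypeName, getParent and maxDepth are my own illustrations, not part of any Smart Office API. Inside a script, getParent would be a small wrapper around VisualTreeHelper.GetParent(), and the depth limit is an extra safety net that the full listing further down does not have.

```javascript
// Walk up a parent chain until a node with the wanted type name is found.
// getParent : caller-supplied function returning the parent of a node
//             (hypothetical; in a Smart Office script it could be
//             function(n) { return VisualTreeHelper.GetParent(n); })
// typeName  : the full type name to look for
// maxDepth  : hypothetical safety limit on how many levels we climb
function findAncestorByTypeName(start, getParent, typeName, maxDepth) {
    var node = getParent(start);
    var depth = 0;
    while (node != null && depth < maxDepth) {
        // String() turns whatever GetType() returns into its text form
        if (String(node.GetType()) === typeName) {
            return node;
        }
        node = getParent(node);
        depth++;
    }
    // nothing found: the caller falls back to its default position
    return null;
}
```

If the helper returns null, the script simply keeps the default left and top values, which is the same fallback the full listing relies on.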

If you have a better way to achieve the same results, feel free to use the comments section!

Setting Our Child Window’s Position

Now really, how difficult…oh wait, we’ve asked that question already 🙂

So we use the controller.Host.CreateDialog() function to create our new window and assign values to the .Left and .Top properties. However, when we call .ShowDialog(), the values are totally ignored. <sigh>…

We can change this by subscribing to the window’s Loaded event and setting our .Left and .Top values there.
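The arithmetic that runs in the Loaded handler is simple enough to show on its own. This is a minimal sketch, again in annotation-free JScript that also runs as plain JavaScript; centerWithin is a name I made up for illustration, and the numbers in the usage note are example values only.

```javascript
// Return the coordinate at which a child of size childSize should start
// so that it is centered on a parent that starts at parentStart and is
// parentSize wide (or high). If the child is not smaller than the parent
// we leave it at the parent's own starting coordinate.
function centerWithin(parentStart, parentSize, childSize) {
    if (parentSize > childSize) {
        return parentStart + (parentSize - childSize) / 2;
    }
    return parentStart;
}
```

For example, with a parent at left 700 that is 1000 wide and a child that is 400 wide, centerWithin(700, 1000, 400) gives 1000. The same function works for the vertical direction by passing the top and the two heights.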

StatusBar and the Standard Close, Previous, Next buttons

These ones I’ve already done most of the leg work on in my SDK projects. The StatusBar is under the Mango.UI.Controls namespace and the standard Close, Previous and Next buttons are from the Mango.DesignSystem namespace.

The code will give you an idea of how to use them. I did have to hard-code the width of the Previous button. I couldn’t find anything that defines the standard width when the button has no text, only an icon, so I had a look at the width of one of the existing Previous buttons.

The Code

    import System;
    import System.Windows;
    import System.Windows.Controls;
    import MForms;
    import Mango.UI.Controls;
    import System.Windows.Media;
    import Mango.DesignSystem;
    import Mango.UI;

    package MForms.JScript
    {
        class testStatusBar
        {
            var gdbgDebug;
            var gctController;

            // this is the host object that represents our child window
            var gihChildWindowInstanceHost;

            // default position, used if we can't determine where the parent window is
            var gdblParentWindowLeft : double = 700;
            var gdblParentWindowTop : double = 200;
            var gdblParentWindowWidth : double = 0;
            var gdblParentWindowHeight : double = 0;

            var gtibCloseButton : ThemeIconButton;
            var gtibNextButton : ThemeIconButton;
            var gtibPreviousButton : ThemeIconButton;

            public function Init(element: Object, args: Object, controller : Object, debug : Object)
            {
                var content : Object = controller.RenderEngine.Content;

                gdbgDebug = debug;
                gctController = controller;

                gdblParentWindowWidth = gctController.Host.Width;
                gdblParentWindowHeight = gctController.Host.Height;

                try
                {
                    // how well supported this is, is questionable,
                    // so let's wrap it in a try..catch() so we can
                    // always go back to the default position values
                    if(null != gctController.Host.VisualElement)
                    {
                        // get the parent of the visual element, in ISO 10.1.0.19 this ends
                        // up being what we want, however this may change
                        var actualWindow = VisualTreeHelper.GetParent(gctController.Host.VisualElement);

                        // let's iterate up the tree until we have the EmbeddedHostWindow
                        while((null != actualWindow) && (actualWindow.GetType().ToString() != "Mango.UI.Services.EmbeddedHostWindow"))
                        {
                            actualWindow = VisualTreeHelper.GetParent(actualWindow);
                        }

                        if(null != actualWindow)
                        {
                            // we'll cast to the actual real object
                            var ehwEmbeddedHostWindow : Mango.UI.Services.EmbeddedHostWindow = actualWindow;

                            gdbgDebug.WriteLine("actualWindow = " + ehwEmbeddedHostWindow.GetType());

                            // verify it has a margin property (it uses the margin and not the
                            // left,top)
                            if(null != ehwEmbeddedHostWindow.Margin)
                            {
                                gdbgDebug.WriteLine("ehwEmbeddedHostWindow.Margin.Left = " + ehwEmbeddedHostWindow.Margin.Left);
                                gdbgDebug.WriteLine("ehwEmbeddedHostWindow.Margin.Top = " + ehwEmbeddedHostWindow.Margin.Top);

                                // make sure that we actually have values...I'm deliberately using >= 1 as we are using
                                // doubles and we all know about doubles!
                                if((ehwEmbeddedHostWindow.Margin.Left >= 1) && (ehwEmbeddedHostWindow.Margin.Top >= 1))
                                {
                                    // save our position for later manipulation
                                    gdblParentWindowLeft = ehwEmbeddedHostWindow.Margin.Left;
                                    gdblParentWindowTop = ehwEmbeddedHostWindow.Margin.Top;
                                }
                            }
                        }
                    }
                }
                catch(ex)
                {
                    gdbgDebug.WriteLine("Failed to retrieve the parent window's position, we will rely on the defaults. " + ex);
                }

                gdbgDebug.WriteLine("Width = " + gctController.Host.Width);
                gdbgDebug.WriteLine("Height = " + gctController.Host.Height);

                createSimulateWindow("Test");
            }

            // create our window, we're also going to create a statusbar
            // we want to be able to position our window rather than
            // rely on the rather random default window positioning
            private function createSimulateWindow(astrWindowTitle : String)
            {
                // first we need to create our Smart Office window
                gihChildWindowInstanceHost = gctController.Host.CreateDialog();
                gihChildWindowInstanceHost.Width = 400;
                gihChildWindowInstanceHost.Height = 200;

                // set the title that appears
                gihChildWindowInstanceHost.HostTitle = astrWindowTitle;

                // set up a grid which will be used to display our content
                // in the body of the window
                var gdGrid : Grid = new Grid();
                gdGrid.MinWidth = 300;
                gdGrid.MinHeight = 150;
                gdGrid.VerticalAlignment = VerticalAlignment.Stretch;
                gdGrid.HorizontalAlignment = HorizontalAlignment.Stretch;

                // originally I had been using WindowContent - which wiped out the
                // title, then I discovered the HostContent property
                // which is the body of the window
                gihChildWindowInstanceHost.HostContent = gdGrid;

                // we want a status bar so we look like a REAL window
                var stsBar : StatusBar = new StatusBar();
                stsBar.HorizontalAlignment = HorizontalAlignment.Stretch;
                stsBar.VerticalAlignment = VerticalAlignment.Bottom;

                // add the Status bar to the grid
                gdGrid.Children.Add(stsBar);

                // add a message to the status bar, there is also a method to
                // make the message timeout and disappear
                stsBar.AddMessage("My Statusbar");

                // add the standard Next/Previous/Close buttons
                gtibNextButton = new ThemeIconButton();
                gtibNextButton.IconName = "Next";
                gtibNextButton.Content = "Next";
                gtibNextButton.IconAlignment = HorizontalAlignment.Right;
                gtibNextButton.IsPrimary = true;
                gtibNextButton.VerticalAlignment = VerticalAlignment.Bottom;
                gtibNextButton.HorizontalAlignment = HorizontalAlignment.Right;
                gtibNextButton.Margin = new Thickness(0, 0, Margins.Medium, Margins.Small + 20);
                gdGrid.Children.Add(gtibNextButton);

                // assign to the class field (no 'var' here), otherwise a local
                // variable hides the field and OnRequested() can't unhook the event
                gtibPreviousButton = new ThemeIconButton();
                gtibPreviousButton.IconName = "Previous";
                gtibPreviousButton.IconAlignment = HorizontalAlignment.Right;
                gtibPreviousButton.IsPrimary = false;
                gtibPreviousButton.VerticalAlignment = VerticalAlignment.Bottom;
                gtibPreviousButton.HorizontalAlignment = HorizontalAlignment.Right;
                gtibPreviousButton.Margin = new Thickness(0, 0, 80 + (Margins.Medium*2), Margins.Small + 20);

                // I had to retrieve this value from an existing previous control
                gtibPreviousButton.Width = 40;
                gdGrid.Children.Add(gtibPreviousButton);

                gtibCloseButton = new ThemeIconButton();
                gtibCloseButton.IconName = "Close";
                gtibCloseButton.Content = "Close";
                gtibCloseButton.IconAlignment = HorizontalAlignment.Left;
                gtibCloseButton.IsPrimary = false;
                gtibCloseButton.VerticalAlignment = VerticalAlignment.Bottom;
                gtibCloseButton.HorizontalAlignment = HorizontalAlignment.Left;
                gtibCloseButton.Margin = new Thickness(Margins.Medium, 0, 0, Margins.Small + 20);
                gdGrid.Children.Add(gtibCloseButton);

                // Previous deliberately shares the Close handler in this test
                gtibNextButton.add_Click(OnNextClicked);
                gtibCloseButton.add_Click(OnCloseClicked);
                gtibPreviousButton.add_Click(OnCloseClicked);

                // add the Requested Event so we can clean up our script
                gctController.add_Requested(OnRequested);

                // because we want to position our window
                // we need to modify the Left and Top properties
                // when we get the Loaded event
                gihChildWindowInstanceHost.add_Loaded(OnLoaded);

                // finally, show our dialog box
                gihChildWindowInstanceHost.ShowDialog();
            }

            private function OnCloseClicked(sender : Object, e : RoutedEventArgs)
            {
                gihChildWindowInstanceHost.Close();
                ConfirmDialog.ShowInformationDialog("Close/Previous Clicked");
            }

            private function OnNextClicked(sender : Object, e : RoutedEventArgs)
            {
                ConfirmDialog.ShowInformationDialog("Next Clicked");
            }

            public function OnRequested(sender : Object, e : RequestEventArgs)
            {
                // be a good Smart Office Citizen and remove our event handlers
                gtibPreviousButton.remove_Click(OnCloseClicked);
                gtibCloseButton.remove_Click(OnCloseClicked);
                gtibNextButton.remove_Click(OnNextClicked);
                gihChildWindowInstanceHost.remove_Loaded(OnLoaded);
                gctController.remove_Requested(OnRequested);
            }

            public function OnLoaded(sender : Object, e : RoutedEventArgs)
            {
                // work on local copies so the saved parent position isn't
                // shifted again if the Loaded event fires more than once
                var dblLeft : double = gdblParentWindowLeft;
                var dblTop : double = gdblParentWindowTop;

                // here we are going to calculate the middle of the parent window
                // that is assuming that our own window isn't wider than the parent
                if(gdblParentWindowWidth > gihChildWindowInstanceHost.Width)
                {
                    dblLeft += ((gdblParentWindowWidth - gihChildWindowInstanceHost.Width) / 2);
                }

                // here we are going to calculate the middle of the parent window
                // that is assuming that our own window isn't higher than the parent
                if(gdblParentWindowHeight > gihChildWindowInstanceHost.Height)
                {
                    dblTop += ((gdblParentWindowHeight - gihChildWindowInstanceHost.Height) / 2);
                }

                // now set the specific location on the screen
                gihChildWindowInstanceHost.Left = dblLeft;
                gihChildWindowInstanceHost.Top = dblTop;
            }
        }
    }

Hi. Thank you for your code 🙂

Can we access the Show History of the StatusBar (with a click, like in a standard MForms panel)?

Hi, can you tell me how to change a button’s font size?

Take a look at the FontSize property on the Button control.

https://msdn.microsoft.com/en-us/library/system.windows.controls.button(v=vs.110).aspx

Cheers,

Scott